AnimeLSTM

2020-05-17 · 4 min read

A project where I generated my own anime music using AI!

Example Anime Music AI Clip:

So this week I decided to finally sit down and build a cool project with AI! After thinking about what would be fun to make, I settled on something I really like: anime music. This wasn't completely new territory for me. I have experience writing neural nets and working with time-series data, so I had an idea of how to approach it.

For more in-depth comments and explanations, I recommend reading my Jupyter Notebook on GitHub.

Data Gathering

Before I started building the neural network, I looked around to see if there were already any good anime music datasets on Kaggle, and lo and behold, there were none. So I thought about what data would be suitable for a project like this and laid out my criteria:

- It has to be digital (note data), not analog (a recorded sound wave). Neural networks won't always be 100% accurate, and it would be very hard to output a sound wave that sounds decent, whereas digital note data is easier to work with and far more forgiving.

- It has to contain good anime music for the model to learn from.

- The format has to be common and widely used enough that even lesser-known anime songs have been transcribed in it.

After weighing all of that, I decided that the best format would be piano MIDI files, because MIDI is digital, easy to use, and many people have already converted anime songs to it. The main problem was gathering the data. Though it's not in the repository, I built a web scraper (not a spider, because I wanted to hand-curate every song) and managed to collect about 130 songs!

These can be found on GitHub or on Kaggle. Next came parsing the data and training the model.

Data Parsing & Training

Luckily for me, there was already a Python library that parses MIDI files (music21, developed at MIT), so I could easily parse the files and extract the notes. After that, I worked through three versions, one at a time.

- For v1, I only extracted notes and chords from the files, so it would be easy to test whether the output could sound good at all.

- For v2, I extracted rests in addition to notes and chords, increased the amount of data (previously 88), and extended the look-back (more on that later) to 200.

- Lastly, for v3, I went all in and included tempo, keys, and key signatures. This significantly increased the number of notes, which affected the neural network architecture.
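To make the three versions above a bit more concrete, here is a minimal sketch of how parsed MIDI events could be turned into string tokens and then into integer ids. It does not call music21: the `Event` class stands in for the parser's output, and the token formats (pitch names like `C4`, sorted pitch classes joined by dots like `0.4.7` for chords, `rest`, and v3-style `tempo:`/`key:` tokens) are hypothetical choices, not necessarily the notebook's actual encoding. The `include_rests` flag mirrors the v1/v2 difference.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


@dataclass
class Event:
    """Hypothetical stand-in for one parsed MIDI event."""
    kind: str                      # "note", "chord", or "rest"
    pitches: Tuple[int, ...] = ()  # MIDI pitch numbers (60 = middle C)


def pitch_name(midi_pitch: int) -> str:
    """Convert a MIDI pitch number to a name such as 'C4' (60 -> 'C4')."""
    return f"{NOTE_NAMES[midi_pitch % 12]}{midi_pitch // 12 - 1}"


def encode(events: List[Event], include_rests: bool) -> List[str]:
    """Turn events into string tokens.

    Notes become pitch names, chords become their sorted pitch classes joined
    with dots (C major -> '0.4.7'), and rests become 'rest' only if include_rests.
    """
    tokens = []
    for ev in events:
        if ev.kind == "note":
            tokens.append(pitch_name(ev.pitches[0]))
        elif ev.kind == "chord":
            classes = sorted({p % 12 for p in ev.pitches})
            tokens.append(".".join(str(c) for c in classes))
        elif ev.kind == "rest" and include_rests:
            tokens.append("rest")
    return tokens


def build_vocab(songs: List[List[str]]) -> Dict[str, int]:
    """Give every distinct token across all songs an integer id (sorted for reproducibility)."""
    distinct = sorted({tok for song in songs for tok in song})
    return {tok: i for i, tok in enumerate(distinct)}


def to_ids(tokens: List[str], vocab: Dict[str, int]) -> List[int]:
    """Map a token sequence to the integer ids the network consumes."""
    return [vocab[tok] for tok in tokens]


song = [Event("note", (60,)), Event("rest"), Event("chord", (60, 64, 67)), Event("note", (69,))]
v1_tokens = encode(song, include_rests=False)  # ['C4', '0.4.7', 'A4']
v2_tokens = encode(song, include_rests=True)   # ['C4', 'rest', '0.4.7', 'A4']
# Hypothetical v3-style extra tokens for tempo and key information.
v3_tokens = ["tempo:120", "key:A minor"] + v2_tokens
print(len(build_vocab([v1_tokens])), len(build_vocab([v3_tokens])))  # 3 6
```

Each new kind of token (rests in v2, tempo and key tokens in v3) grows the vocabulary. Because a next-note classifier needs one output per token, a larger vocabulary is one way such a change can alter the network's shape.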

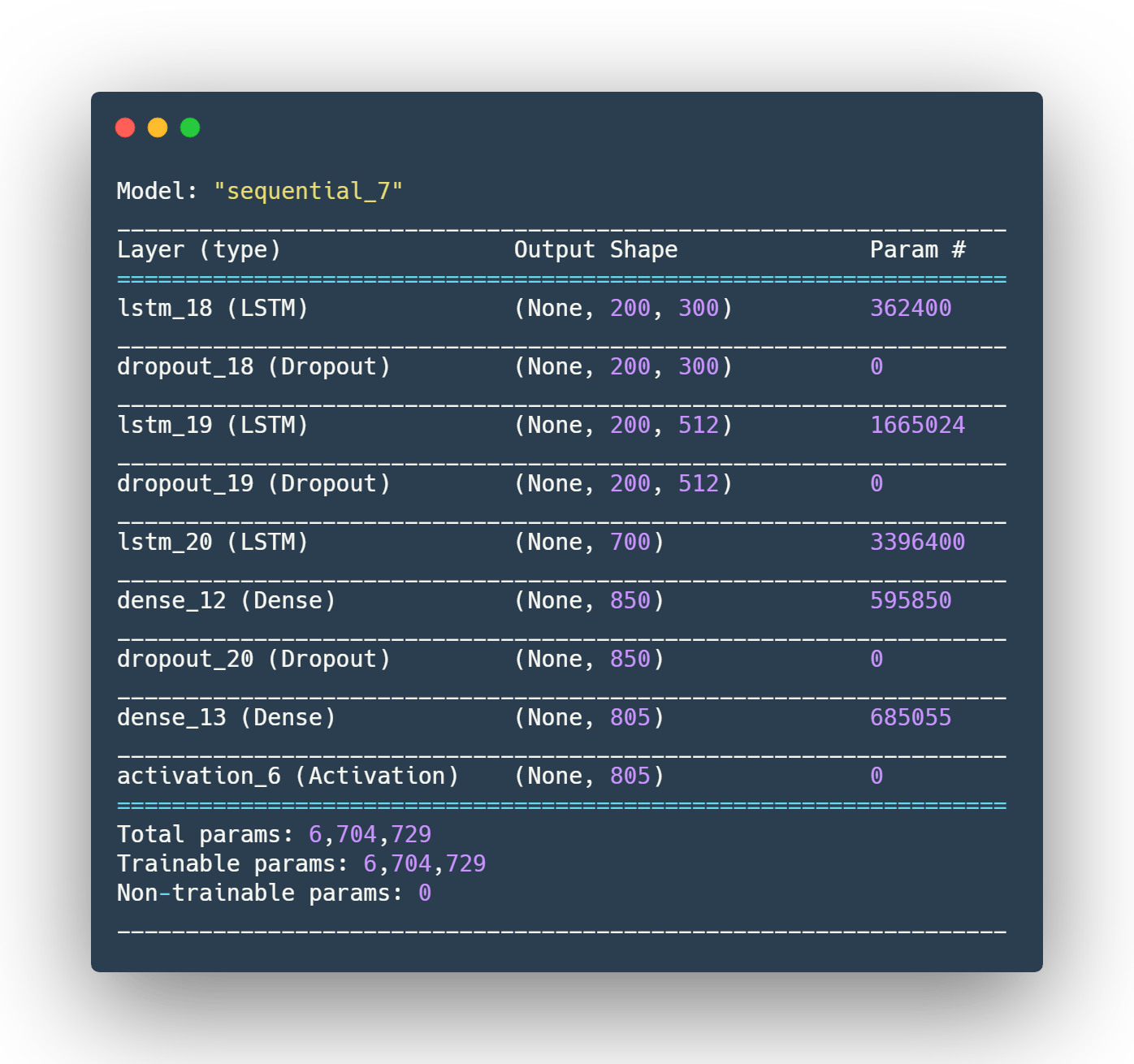

After working through all of those versions, I started training my neural network. The way I imagined a person would do this is to look at the past notes and infer what should come next. To model this, I used LSTM (Long Short-Term Memory) layers, which keep an internal memory that gets updated at each step as they cycle through past values. I fed in the previous 100 notes (raised to 200 in v2 and v3) to get 1 note out, which I suspected would be the easiest task for a neural network (because it would be feasible for a human to do). My actual neural network architecture is shown in the accompanying image.
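The sketch below illustrates two ideas from the paragraph above under simplifying assumptions. It slices a token-id sequence into look-back windows with one target note each, and it runs a from-scratch NumPy forward pass of a single LSTM layer whose cell state `c` carries information from earlier notes forward. `make_windows`, `TinyLSTM`, and `generate` are hypothetical names. The weights are random and untrained, inputs are one-hot vectors, and greedy (argmax) generation is just one possible choice. This is not the architecture from the post, which a real project would typically build and train with a deep learning framework. The window size here is 3 instead of 100 or 200 to keep the example small.

```python
from typing import List, Tuple

import numpy as np


def make_windows(ids: List[int], look_back: int) -> Tuple[List[List[int]], List[int]]:
    """Each input is `look_back` consecutive ids; its target is the id right after them."""
    inputs, targets = [], []
    for i in range(len(ids) - look_back):
        inputs.append(ids[i:i + look_back])
        targets.append(ids[i + look_back])
    return inputs, targets


def sigmoid(z: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-z))


class TinyLSTM:
    """One LSTM layer plus a softmax output layer (forward pass only, random untrained weights)."""

    def __init__(self, vocab_size: int, hidden_size: int, seed: int = 0):
        rng = np.random.default_rng(seed)
        self.vocab_size = vocab_size
        self.hidden_size = hidden_size
        # Stacked weights for the four gates, applied to the concatenation [x_t, h_{t-1}].
        self.W = rng.normal(0.0, 0.1, size=(4 * hidden_size, vocab_size + hidden_size))
        self.b = np.zeros(4 * hidden_size)
        # Output layer: one score per token in the vocabulary.
        self.W_out = rng.normal(0.0, 0.1, size=(vocab_size, hidden_size))
        self.b_out = np.zeros(vocab_size)

    def predict_next(self, window: List[int]) -> np.ndarray:
        """Read the window one token at a time, then return probabilities for the next token."""
        H = self.hidden_size
        h = np.zeros(H)  # hidden state (the layer's output at each step)
        c = np.zeros(H)  # cell state (the long-term memory)
        for token_id in window:
            x = np.zeros(self.vocab_size)
            x[token_id] = 1.0                          # one-hot input
            z = self.W @ np.concatenate([x, h]) + self.b
            i = sigmoid(z[0:H])                        # input gate: how much new info to write
            f = sigmoid(z[H:2 * H])                    # forget gate: how much old memory to keep
            o = sigmoid(z[2 * H:3 * H])                # output gate: how much memory to expose
            g = np.tanh(z[3 * H:])                     # candidate values to write
            c = f * c + i * g
            h = o * np.tanh(c)
        logits = self.W_out @ h + self.b_out
        exp = np.exp(logits - logits.max())            # numerically stable softmax
        return exp / exp.sum()


def generate(model: TinyLSTM, seed_window: List[int], length: int) -> List[int]:
    """Greedy generation: predict one token, append it, slide the window, repeat."""
    window = list(seed_window)
    out = []
    for _ in range(length):
        next_id = int(np.argmax(model.predict_next(window)))
        out.append(next_id)
        window = window[1:] + [next_id]
    return out


inputs, targets = make_windows([0, 1, 2, 3, 4], look_back=3)
print(inputs, targets)  # [[0, 1, 2], [1, 2, 3]] [3, 4]
model = TinyLSTM(vocab_size=5, hidden_size=8)
print(generate(model, seed_window=inputs[0], length=4))
```

Feeding each prediction back into the window, as `generate` does, is a common way for a model that outputs only 1 note at a time to produce a whole song.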

Results

I bet now you're just like, SHOW ME THE MONEY!!! So here are some samples (more can be found on the GitHub or on the Google Drive):

Version 1

Version 2

Version 3

Conclusion

In short, this was a really fun experiment where I learned a ton about LSTM networks and music encoding. Technically, you could train this on other MIDI collections (gaming, classical, hip-hop, etc.), so I might try making AI-generated Undertale music in the future ( ͡° ͜ʖ ͡°). You can check out the source code on GitHub at https://github.com/neelr/AnimeLSTM! In the future, I'd also like to make a 24/7 YouTube stream of AI-generated music (and will link to it if I do), so I can easily play some music whenever I'm studying. Leave a star if you enjoyed the music, thanks so much for reading to the end, and feel free to contact me at neel.redkar@outlook.com!

Update!

I visualized the music and created a 1-hour YouTube video to listen to!